Working on the next big thing in AI

Excellence, diversity and impact

We thoroughly understand the latest AI techniques and application domains. Scientific excellence, engineering ingenuity and the diversity of people and perspectives define the way we work. We are open to collaboration and always strive for real-world impact.

Get to know our research and meet the people behind it!

Latest news

Outstanding work deserves credit. We want to highlight the extraordinary work of our teachers and put their effort in the spotlight. Each semester, the AI Center will announce the "Outstanding Teaching Assistant Award", which comes with prize money of 15,000 CZK. The winner will receive the award in April 2024.

Industry Collaboration

The EU is supporting a Horizon Europe project on fair AI algorithms with 3.8 million euros. Imperial College London, the Technion (Israel Institute of Technology) and the National and Kapodistrian University of Athens, as well as partners from industry, are collaborating on explainable and transparent algorithms.

Partner up with AIC

We help companies advance their AI technologies through consulting, piloting and contractual research. A joint research lab is one possible mode of collaboration, and the one that excites us the most!

Work with us!

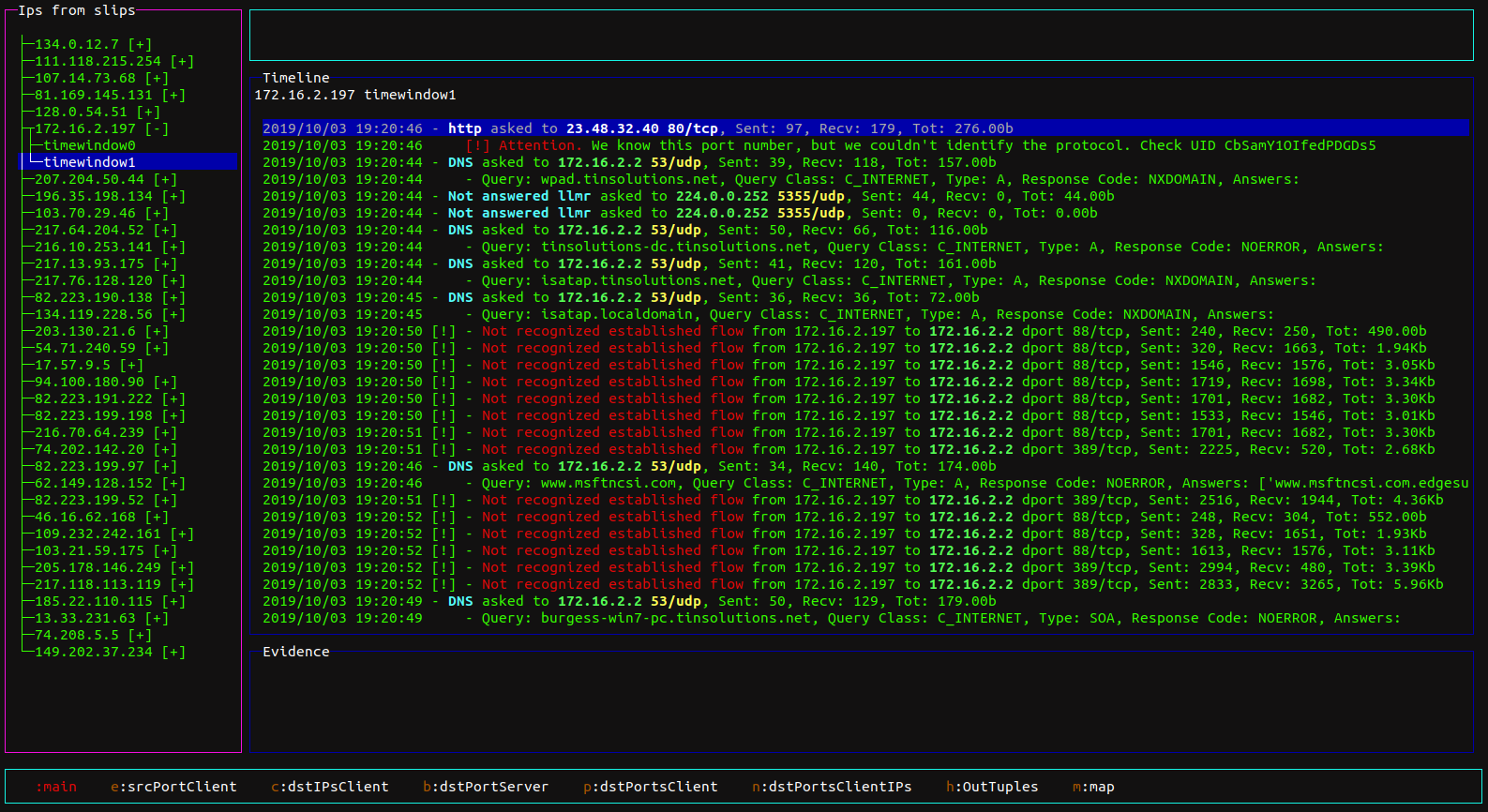

I am fascinated by the fact that through high-quality security research we are actually protecting civil society.